Learning has a measurement problem. It’s hard to measure the impact of L&D… so hard, in fact, that some companies have stopped even trying to measure it.

As L&D professionals, we inherently understand that learning helps us grow our people. That can lead us into the trap of simply trusting that what we’re doing works without ever collecting the specific data to back it up.

Of course, vanity metrics or ‘happy sheets’ might tick the boxes for our stakeholders (‘Look! 83% of learners enjoyed this workshop – it must be great!’), but they tell us absolutely nothing about how well our learners are doing in their jobs, and how our L&D initiatives have impacted performance.

We’re long overdue an overhaul of the way we measure learning ROI – in fact, it’s key if we’re ever going to shift the perception of L&D teams from order takers to strategic business partners. So where are we going wrong, and how can we fix it?

What is moving the needle?

Organisations worldwide spend an average of £1,037 per employee on L&D each year, yet many struggle to determine whether that spend on training programmes actually translates into real-world skill application and a good return on investment.

If we don’t know what’s moving the needle, how can we design better learning programmes? And how can we prove that what we do matters to the wider organisation? Completion data, surveys and self-assessments are all nice to have, but there’s minimal focus on measurable improvement – and isn’t that the whole point of what we do?

Only 36% of L&D practitioners are assessing specific metrics related to the effectiveness of learning programmes.

- Raconteur

Revisiting the Kirkpatrick model

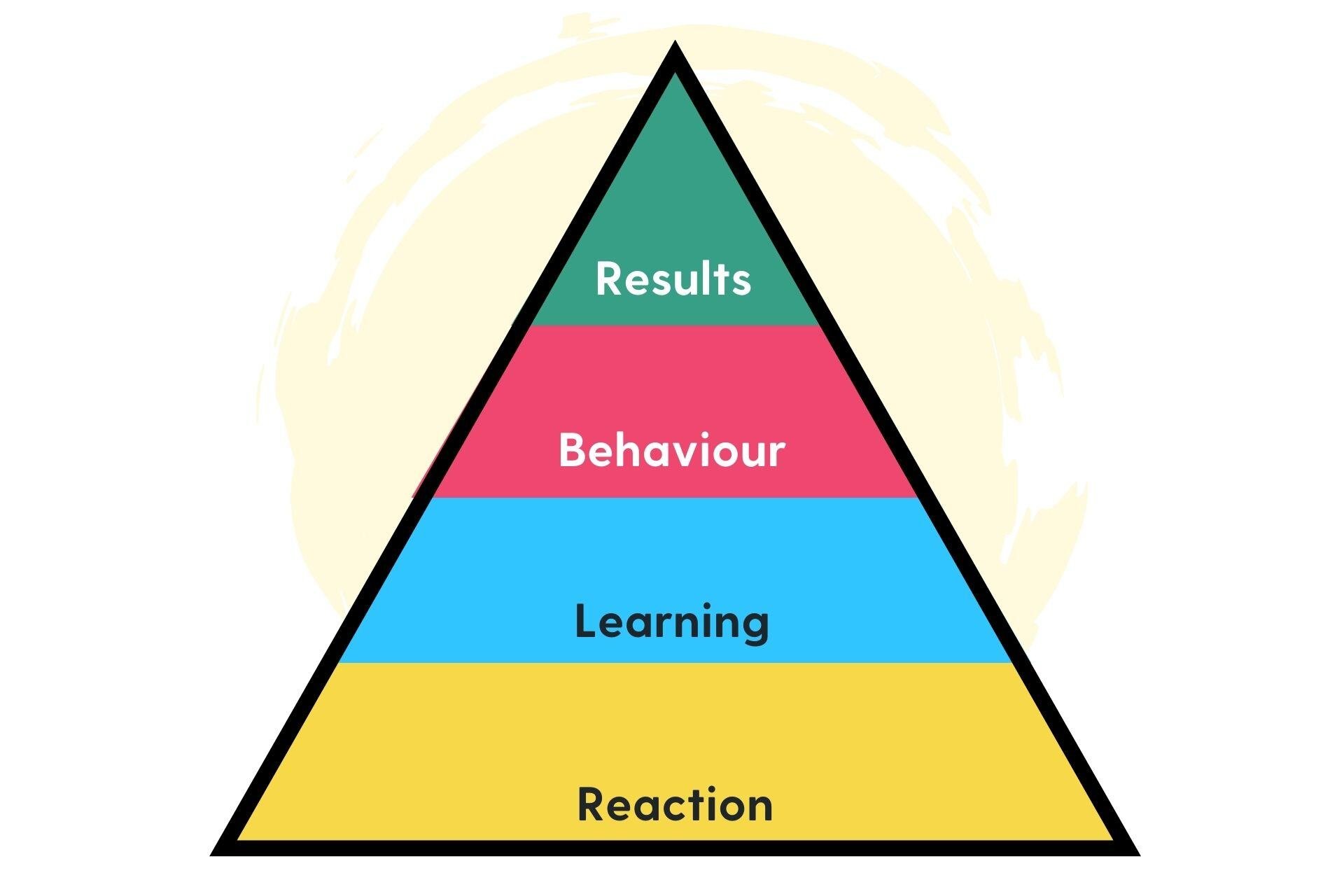

The Kirkpatrick model is a popular framework for evaluating the impact and effectiveness of learning programmes. It breaks the evaluation down into four levels:

- Level 1: Reaction – The immediate reactions of learners, such as their enjoyment and engagement with the learning

- Level 2: Learning – Whether or not learners have gained the intended knowledge, skills and behaviours

- Level 3: Behaviour – Whether or not learners are applying their new knowledge and skills on the job

- Level 4: Results – The impact of the learning programme on business outcomes, such as costs, performance and productivity

Too often, learning teams will collect data for level 1 and call it a day. Those ‘happy sheets’ passed around after a workshop or the 1-10 learner ratings at the end of an elearning course might make us feel good, but they don’t really prove anything in terms of real, measurable ROI.

Level 2 is usually measured immediately after the learning has taken place, perhaps through a quiz or assessment. It tells L&D whether learners understood the content, but again, it doesn’t show the full ROI of the programme.

Levels 3 and 4 are where the most useful data tends to live, but they are the least likely to be measured. Both behaviour change and business results have to be measured further down the line to capture the long-term impact of the learning, but by then L&D teams have often moved on to the next thing, so we never dig deeper into the true behavioural and business impact of our learning programmes.

So what’s the problem with the way we’re approaching learning ROI today?

The problem with learning ROI measurement

96% of L&D professionals want to better understand the impact of their activities, but only 22% are actively working to improve their data gathering and analysis methods.

- Raconteur

The way we typically measure learning ROI is flawed. We spend too much time measuring what doesn’t really matter in the grand scheme of things, and not enough time (if any) digging into the data that proves our value to the business, such as the impact of learning on the bottom line.

Let’s take a look at some of the problems with the current approach to learning ROI measurement.

1. Overemphasis on memory

Quizzes and multiple-choice questions check knowledge retention, but do little to evaluate practical skills or problem-solving ability. An employee who is better at memorising terminology than a colleague won’t necessarily be better at applying new skills in the workplace.

2. Not enough hands-on assessments

Skills development requires real-world application. Tasks like coding, writing and design demand practice, yet many learning platforms lack the tools to assess real execution. Without hands-on evaluation, it’s difficult to track progress in skills development.

3. One-size-fits-all learning paths

It’s no secret that one size doesn’t fit all when it comes to learning. Standardised assessments fail to adapt to individual growth, interests and experience. Employees develop at different rates, which rigid learning structures can’t take into account. Without adaptive learning mechanisms, skill progression goes unmeasured and ends up poorly understood across the board.

4. Static data and limited integration

LMS signups and course completion rates are classic vanity metrics, offering a very narrow (and not very useful) view of learning. A learner completing a course or reading a training guide doesn’t mean they gained the desired skills or can apply them in the workplace. All we know is that they reached the end of the elearning course, watched the video or scrolled through the PDF.

5. Poor measurement of soft skills

Soft skills like communication, leadership or empathy require observation, peer feedback or real-world project reviews – they can’t be ‘measured’ or assessed using a traditional test. A traditional LMS will rarely incorporate these measurement types, making it difficult to assess interpersonal and strategic thinking skills.

6. Manual and biased processes

On the subject of observations and peer reviews, these assessment types can be influenced by personal biases or limited observation windows, making them prone to inconsistency, inaccuracy and subjectivity.

7. Lack of continuous feedback

Most learning assessments provide a one-time snapshot rather than an ongoing evaluation of skills. Without continuous feedback, employees miss opportunities to refine and reinforce their skills over time, while managers have only one data point from one moment in time to assess an employee’s understanding of the material.

8. Challenges in performance-based evaluation

Simulations and project work are good ways to understand an employee’s ability level, but they can be tricky to implement at scale. Many learning platforms struggle to support these more in-depth assessment types, limiting the ability to assess true competency.

9. Static and incomplete skill profiles

Without a dynamic, real-time review, skill profiles are ineffective for succession planning, building career paths or shaping development initiatives. After all, would you want your entire career to ride on a single quiz you squeezed in between meetings on a busy Tuesday morning?

Over the coming weeks, we’re going to be digging deeper into why ROI is broken in learning, and what we’re planning to do to fix it. We can’t say too much just yet, but we can guarantee you’ve never seen anything like this before…